Robotic Arm Manipulation

The goal of this project was to develop a robotic arm framework that can communicate with an industrial worker in order to execute commands. It was part of the COCOBOTS project of the University of Potsdam, in collaboration with Linagoras, Synergeticon and ANITI.

Concretely, the goal was to execute a pick-and-place task by developing a robotic arm manipulation module that takes as input the outputs of the Computer Vision (CV) and Natural Language Processing (NLP) modules. I did not design the CV and NLP modules; I only integrated them into the framework.

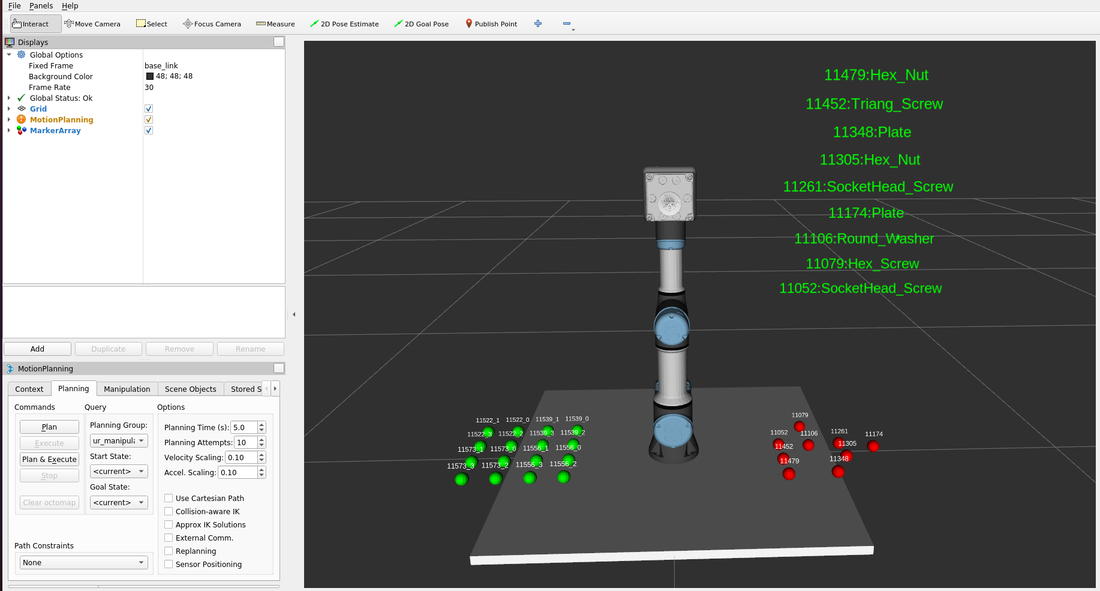

For the robotic arm manipulation module, I used ROS2 in a Dockerized environment, under a unified framework for the real and the simulated robot. The simulation runs in Webots. The robot used to carry out the task was a Universal Robots UR3e, coupled with an OnRobot VGC10 suction gripper.

In simulation, a custom YAML file acts as a spawner: the user lists in it all the objects to be spawned in the environment. On the real robot, the objects are instead detected by the CV module.
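To give an idea of what such a spawner file could contain, here is a minimal sketch. The keys (`objects`, `id`, `type`, `position`), the object names and the coordinates are all assumptions made for illustration, not the actual file format of the project. To stay dependency-free, the sketch starts from the dictionary a YAML parser would return and only shows the validation step.

```python
from dataclasses import dataclass

# Hypothetical spawner file (all keys and values are illustrative):
#
#   objects:
#     - id: screw_1
#       type: screw
#       position: [0.30, -0.10, 0.02]
#     - id: nut_1
#       type: nut
#       position: [0.35, -0.10, 0.02]
#
# Parsing it with a YAML library would yield the dictionary below.
PARSED_SPAWNER = {
    "objects": [
        {"id": "screw_1", "type": "screw", "position": [0.30, -0.10, 0.02]},
        {"id": "nut_1", "type": "nut", "position": [0.35, -0.10, 0.02]},
    ]
}

# Object families mentioned in this write-up.
KNOWN_TYPES = {"screw", "nut", "washer", "plate", "board"}


@dataclass(frozen=True)
class SpawnEntry:
    object_id: str
    object_type: str
    position: tuple  # (x, y, z), assumed to be metres in the world frame


def load_spawn_entries(config: dict) -> list:
    """Validate the parsed file and return one SpawnEntry per object."""
    entries = []
    seen_ids = set()
    for raw in config.get("objects", []):
        if raw["type"] not in KNOWN_TYPES:
            raise ValueError(f"unknown object type: {raw['type']!r}")
        if raw["id"] in seen_ids:
            raise ValueError(f"duplicate object id: {raw['id']!r}")
        if len(raw["position"]) != 3:
            raise ValueError(f"position of {raw['id']!r} needs 3 coordinates")
        seen_ids.add(raw["id"])
        entries.append(
            SpawnEntry(raw["id"], raw["type"], tuple(raw["position"]))
        )
    return entries


entries = load_spawn_entries(PARSED_SPAWNER)
```

Rejecting duplicate IDs at load time matters because the `pick` command later refers to objects by ID only.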

The task was to pick industrial objects from the pick board and place them on the place board in a desired configuration. For motion planning and control, I used MoveIt2.

The objects were custom-made for the task. I designed them in SolidWorks (CAD and STL); they comprise screws, nuts, washers, plates and boards.

For the VGC10 gripper, I had to customize the URDF and PROTO (Webots) files to model the suction gripping behavior for both the real robot and the simulation.

For task planning, I used the SMACH library (finite state machines), migrated to ROS2.
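The idea of a state machine driving a pick can be sketched in plain Python. This is not the SMACH API and not the project's actual state layout: the state names (`APPROACH`, `GRIP`, `LIFT`), the outcome labels and the `vacuum_ok` flag are hypothetical, chosen only to show how states, outcomes and transitions fit together.

```python
# Each state is a function that works on a shared context dictionary
# and returns an outcome label.

def approach(ctx):
    ctx["log"].append(f"approach {ctx['target']}")
    return "succeeded"


def grip(ctx):
    ctx["log"].append("suction on")
    # A real state would query the gripper; here a flag stands in for it.
    return "succeeded" if ctx.get("vacuum_ok", True) else "failed"


def lift(ctx):
    ctx["log"].append("lift")
    return "succeeded"


STATES = {"APPROACH": approach, "GRIP": grip, "LIFT": lift}

# (current state, outcome) -> next state
TRANSITIONS = {
    ("APPROACH", "succeeded"): "GRIP",
    ("GRIP", "succeeded"): "LIFT",
    ("GRIP", "failed"): "ABORTED",
    ("LIFT", "succeeded"): "DONE",
}

TERMINAL = {"DONE", "ABORTED"}


def run(start, ctx):
    """Run states until a terminal label is reached and return it."""
    state = start
    while state not in TERMINAL:
        outcome = STATES[state](ctx)
        state = TRANSITIONS[(state, outcome)]
    return state


ok_ctx = {"target": "screw_1", "log": []}
ok_result = run("APPROACH", ok_ctx)

fail_ctx = {"target": "screw_1", "log": [], "vacuum_ok": False}
fail_result = run("APPROACH", fail_ctx)
```

Keeping the transitions in a table, separate from the state logic, makes the failure paths (such as a lost vacuum) explicit and easy to extend.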

The result is an API that takes the following commands as input:

- `pick object_ID`

- `place hole_ID`

and executes them accordingly.
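As an illustration, the two commands could be parsed along these lines. This is a minimal sketch, not the project's actual code: the `Command` class, the `parse_command` function and the example IDs (`screw_1`, `hole_3`) are hypothetical, and the real ID format may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Command:
    action: str  # "pick" or "place"
    target: str  # object_ID for pick, hole_ID for place


def parse_command(line: str) -> Command:
    """Turn a text line such as 'pick screw_1' into a Command."""
    parts = line.split()
    if len(parts) != 2:
        raise ValueError(f"expected '<action> <ID>', got {line!r}")
    action, target = parts
    action = action.lower()
    if action not in ("pick", "place"):
        raise ValueError(f"unknown action: {action!r}")
    return Command(action, target)


pick_cmd = parse_command("pick screw_1")
place_cmd = parse_command("place hole_3")
```

Rejecting malformed input before any motion is planned keeps a mistyped command from ever reaching the robot.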

The framework matches each object_ID to the type of the object (screw, nut, washer or plate) and manipulates it accordingly. It also recognizes the stack level on the place board.
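One way to picture the type matching and the stack-level bookkeeping is the sketch below. Everything in it is an assumption for illustration: the `type_index` naming scheme for IDs, the thickness values, and the `PlaceBoard` class are hypothetical and are not taken from the framework itself.

```python
# Hypothetical object thicknesses in metres (illustrative values only).
THICKNESS = {"screw": 0.010, "nut": 0.004, "washer": 0.002, "plate": 0.003}


def object_type(object_id: str) -> str:
    """Infer the type from an ID such as 'washer_2' (assumed naming scheme)."""
    prefix = object_id.split("_", 1)[0]
    if prefix not in THICKNESS:
        raise ValueError(f"cannot infer type of {object_id!r}")
    return prefix


class PlaceBoard:
    """Tracks which objects are stacked on each hole of the place board."""

    def __init__(self, surface_z: float):
        self.surface_z = surface_z
        self.stacks = {}  # hole_ID -> list of object_IDs, bottom first

    def stack_level(self, hole_id: str) -> int:
        """Number of objects already placed on this hole."""
        return len(self.stacks.get(hole_id, []))

    def place_height(self, hole_id: str) -> float:
        """Height at which the next object on this hole would rest."""
        stacked = self.stacks.get(hole_id, [])
        return self.surface_z + sum(THICKNESS[object_type(o)] for o in stacked)

    def place(self, hole_id: str, object_id: str) -> float:
        """Record a placement and return the height it was placed at."""
        object_type(object_id)  # validate before changing any state
        z = self.place_height(hole_id)
        self.stacks.setdefault(hole_id, []).append(object_id)
        return z


board = PlaceBoard(surface_z=0.0)
z_washer = board.place("hole_1", "washer_1")
z_nut = board.place("hole_1", "nut_1")
```

With this bookkeeping, the target height of a `place` command depends on what is already on the hole, which is the reason the stack level needs to be tracked at all.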

Overall, I used the following hardware:

- Universal Robots UR3e

- OnRobot VGC10 gripper

- Luxonis OAK-D Lite

These software tools:

- ROS2

- Webots

- Docker

- Ethernet communication

- SolidWorks

- Ubuntu 20.04 (NVIDIA powered)

And these ROS2 libraries:

- MoveIt2 (and pymoveit2)

- UR ROS2 drivers and description

- Webots ROS2 driver

- SMACH (and SMACH visualization)

- OnRobot VGC10 ROS2 drivers (I migrated them from ROS1) and description (customized)

- Luxonis ROS2 drivers

The following video demonstrates an example of a pick and place series: